Write now: we need your support for children’s data protection

National Pupil Database / May 6, 2018

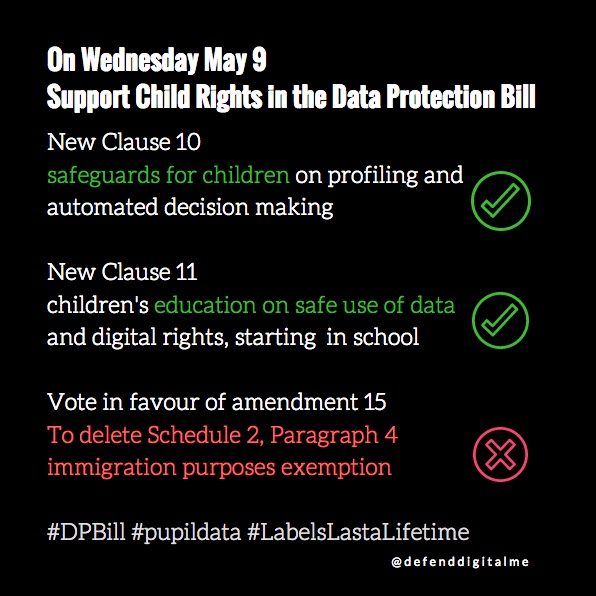

Members of the House of Commons will vote this Wednesday on significant changes in the Data Protection Bill. Please write to your MP today and ask them to support better protection of children’s rights in the Bill.

- Make sure you put “Urgent: Data Protection Bill – Report Stage on 9 May” in the subject line.

- You should tell them you are a constituent and your reason for asking them to support key amendments.

- You must personalise your message, for example to say why you feel passionate about this issue or what aspects particularly concern you.

- Tell them you share defenddigitalme’s key concerns relating to children in the Data Protection Bill, including that in Clause 182 the Secretary of State for Digital, Culture, Media and Sport treats the UN Convention on the Rights of the Child only as something to have regard to, rather than an obligation to meet. Advocate for support of amendments New Clause 10 and New Clause 11. Support amendment 15, which would remove the ‘immigration’ exemption at Schedule 2, Part 1, Paragraph 4.

You might want to use our tips sheet to help you, in your own words. [download tips on the DP Bill. pdf]

Advocate for New Clause 10 (NC10)

Better safeguards and oversight on automated decision-making where it affects a child

ACTION

Please vote in support of the amendment proposed as new clause 10 (NC10), to provide safeguards and oversight of automated decision-making where it affects a child.

WHY

The GDPR states that child-appropriate safeguards are necessary under Article 13(2)(f) and Articles 21-23, on the rights to be told about the consequences of automated profiling and decision-making without human intervention. Recital 71 makes clear such decisions should not routinely apply to a child. How the exemptions apply, and where their boundaries lie, is not at all clear. The Bill so far fails to set out those required safeguards for automated decision-making and profiling of children. We are failing to give children the safeguards they need, or suppliers the clear and consistent standards they should maintain, to uphold human rights in a world of machine learning.

WHAT DIFFERENCE WOULD IT MAKE?

New Clause 10 would require the data controller to undertake a Data Protection Impact Assessment, and make it available to the Information Commissioner’s Office, where they expect to take a significant decision based solely on automated processing which may concern a child. This supports both GDPR Recital 71, under which such decisions should not apply routinely to a child, and the Article 29 Working Party (WP29) recommendations.

The Commissioner would produce and publish a list of safeguards to be applied by data controllers where any significant decision based solely on automated processing may concern a child.

These steps would help bring clarity, consistency and confidence on rights and responsibilities for children and parents, and for schools and their suppliers, in the broader and more general use of profiling, in particular as regards targeted marketing. They would also offer more detail than Bill Clause 123, which covers only data from services ‘likely to be accessed by a child’, not data processing ‘about a child’ in which their data are processed without consent.

BACKGROUND

For common case studies of children’s profiling in education in England please refer to those on pages 3-5 of our submission to the WP29.

As Liam Byrne said at Committee Stage, calling for a Statutory Code of Practice in Education, Lord Knight had also raised the concern that, “Schools routinely use commercial apps for things such as recording behaviour, profiling children, cashless payments, reporting.”

Victoria Atkins MP replied, “Work is going on already. The Department for Education has a programme of advice,” and also noted, “Everyone is very alive to the issue of protecting children and their data.”

Advice alone is not enough to safeguard children from unscrupulous suppliers and the volume of profiling technology already in schools today. Staff receive no standard data protection or data privacy training as part of basic teacher training, despite the vast amount of data collected in education. The Department for Education Cloud Guidance for apps providers does not even ban advertising in apps or websites children have to use.

Thousands of companies harvest children’s names and photographs, and profile their online activity in school and during their homework, throughout their lifetime, through platforms [like Google G-Suite] in the classroom, edTech apps, cashless canteen systems using biometrics, and behavioural monitoring software. Even classroom seating plans now use machine profiling and AI.

- The Times and Daily Mail both recently reported ClassDojo is harvesting and profiling data on how British schoolchildren behave.

- Companies are seeking to incorporate ever more biometric processing in the classroom.

- Data are not only used by commercial companies. Children are also profiled as individuals and as communities across the UK, from national pupil databases, in different ways that school staff never see and don’t communicate.

- National Pupil Data are now linked in England with Police National Computer Data and used in criminology research and interventions in schools. What could possibly go wrong in future with this kind of predictive profiling and its applications? Oversight and safeguards could make these processes more trustworthy and safe.

Please ask your MP to vote in support of the amendment proposed as new clause 10, to provide safeguards and oversight of automated decision-making where it affects a child.

Advocate for New Clause 11 (NC11)

Digital Rights and Data Education for children

This new clause would enable the Secretary of State to require that personal information safety be taught as a mandatory part of the national PSHE curriculum.

ACTION: Please support the principles in amendment New Clause 11 (NC11).

WHY: Children, like everyone else, will flourish better with better digital understanding. Many children, just like many staff in education, have no idea what they’re signing up to when they start using apps and other online platforms, tools and social media. Children do need better digital understanding through education, but that doesn’t mean companies can push the responsibility for safe data use back onto them. People feel disempowered, and we need to restore a sense of agency. The Children’s Commissioner believes that we are failing in our fundamental responsibility as adults to give children the tools to be agents of their own lives. Why not start in school?

Government will no doubt say that, to allow teachers the flexibility to deliver high-quality, non-statutory PSHE, it considers it unnecessary to provide new standardised frameworks or programmes of study, and that teachers are best placed to understand the needs of their pupils and do not need additional central prescription. But teachers have no standard data protection or privacy training as part of their own training. This is a major gap in their own and children’s understanding. We need a complete culture change on children’s privacy rights.

BACKGROUND:

Children’s data in education are transferred in bulk to third party commercial suppliers, made available for surveillance, and used in all sorts of ways that they and their parents do not understand and have no control or choice over. In our #StateOfData2018 poll of parents in England of children aged 5-18, carried out by Survation:

- A quarter of parents said they did not know if their children had been signed up to apps and online services by their school.

- Only 50% think they have control of their child’s digital footprint in school.

- 69% of parents did not know that the Department for Education gives their child’s identifying pupil data away from the National Pupil Database. [Download summary highlights .pdf]

Please ask your MP to vote in support of the amendment proposed as new clause 11, so that personal information safety is taught as a mandatory part of the national PSHE curriculum.

SUPPORT AMENDMENT 15 to REMOVE paragraph 4 of Schedule 2

Oppose the immigration clause

ACTION: Please vote in support of amendment 15, which would delete paragraph 4 of Schedule 2.

WHY: Victoria Atkins MP said at Committee Stage [on March 13, col 72] that national pupil data is specifically a dataset that the exemption would be used for, to find people using children’s national school records.

WHAT DIFFERENCE WOULD IT MAKE?: Read the Education Policy Institute Bill evidence comments on the risks and harms to children and to educational research from the ‘immigration’ exemption at Schedule 2, Part 1, Paragraph 4. Children have human rights to privacy and education. These must not be compromised by using data collected for one purpose for another, more punitive one.

BACKGROUND: Use of national pupil data for immigration enforcement began in secret in 2015. It was discovered during the 2016 expansion of the school census, in which no one told the public or schools that “(once collected) nationality” was to be handed over along with other personal data from the National Pupil Database. The data handovers continue monthly.

Please ask your MP to vote in support of amendment 15, to remove Schedule 2, Part 1, Para 4 from the Bill.

What about a Code of Practice?

At Committee Stage, the government rejected a proposal for a Statutory Code of Practice in education.

Thank you to everyone who wrote to the Committee, especially those whose submissions were not accepted into formal evidence because they arrived before the published closing date of March 27, but after the Committee had closed early on March 22.

We will continue to work towards the introduction of a Code of Practice under Article 57 of the GDPR, without needing any direction from the Secretary of State. Such a Code of Practice is necessary and an “entirely sensible” proposal.

You can show your support and share our everyday campaign work through social media using #LabelsLastaLifetime.

Thank you again. Now please write, today!