Ofqual consultation: How results 2021 could look for exam candidates

Campaign / January 26, 2021

This Friday, January 29th, is the closing date for the Ofqual/DfE consultation on the 2021 exam replacements. It asks how GCSE, AS and A level grades will be awarded, and there is a separate consultation for VTQs and other general qualifications in 2021. The Chartered College of Teaching, among many others, has shared some of its responses.

We will not comment in detail on matters outside our remit, for example the proposal to set exams that would take place because exams cannot take place, the appeals process, or how a grade is calculated. But we want to publish our view on how the process of ‘how a grade is calculated’ should be explained to candidates.

The consultation has nearly 70 questions but fails to suggest an answer to the most important one: How will candidates trust that a grade is accurate and fair?

Although the Ofqual blog describes “How 2021 could look for students and learners”, there is no practical grasp yet evident of what students need and expect. The 2020 fallout will be lasting in the lives of thousands of affected students. It may also have a lasting effect in shaping their attitudes towards AI, after their experience of what the PM called ‘a mutant algorithm’, and it all cost not one but two Ofqual executives their positions.

What will be different this year and going forwards, so that students won’t need to stand outside Gavin Williamson’s office in Westminster in order to be heard? What concretely failed last year, and what concretely will be done to avoid those failures this year?

To trust that a grade is accurate and fair, candidates need access to understandable information about what has gone into its calculation. We propose that every candidate should receive a Personal Exam Grade Explainer (shortened to P.E.G.E., which we’ll call “Peggy”): an individual report for each result. Now and in future, Peggy should help transparently explain how *my* grade was calculated, not provide generic descriptions of the process.

Every candidate is already given a results slip today with some personal details and the grade. Using the same delivery mechanism, the report would also explain how each grade was reached for the candidate, making the data inputs used transparent and understandable. Some candidates are still children aged under 18, and today they could argue that bodies do not meet their existing obligations under UK data protection law and the GDPR Articles 13 and 14 right to be informed. As Recital 58 of the GDPR puts it, “Given that children merit specific protection, any information and communication, where processing is addressed to a child, should be in such a clear and plain language that the child can easily understand.”

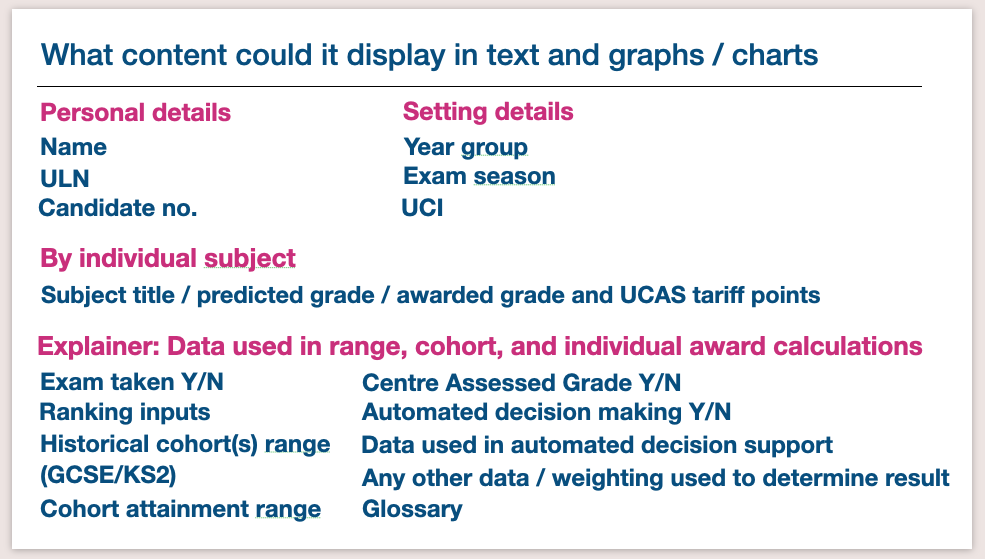

It should be simple and short, using text and graphics. Peggy might include a chart of the subject grade boundaries, a table of how comparable outcomes were determined and the centre’s grade range, and explain the effect of historic cohort data from previous results. Cohort Key Stage 2 SATs results or GCSEs used in the model, as well as any individual test or competency scores, could be included. It would set out how each of those sets of data affected your individual result, as well as offering a glossary and explaining how the appeals and resit processes both work.

Peggy would help exam boards meet their legal obligations to explain their personal data processing and any automated decision making, and help educational settings meet their obligations to explain how personal data are used and to ensure data accuracy. It should help expose any input errors more quickly. It would better support schools and candidates in understanding how close they were to a grade boundary, supporting a faster decision for candidates on whether a grade is likely to change after an appeal and remark, or on retaking the exam. And overall, increased transparency should restore trust in the awarding process.
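To make the idea concrete, here is a minimal sketch of what the data behind one Peggy report might look like, including the "how close was I to a grade boundary" figure. Everything in it is an assumption for illustration: the `PeggyReport` name, its fields, and the marks and boundaries are invented, and a real design would need to be agreed across exam boards.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class PeggyReport:
    """Hypothetical per-candidate, per-subject explainer record."""
    subject: str
    awarded_grade: str
    candidate_mark: int
    # Minimum mark for each grade (illustrative numbers, not real boundaries).
    grade_boundaries: Dict[str, int]
    # Plain-language note on each data input used, e.g. the centre's grade range.
    inputs_used: Dict[str, str] = field(default_factory=dict)

    def marks_to_next_boundary(self) -> Optional[int]:
        """Marks needed to reach the next grade up, or None at the top grade."""
        higher = [m for m in self.grade_boundaries.values() if m > self.candidate_mark]
        return min(higher) - self.candidate_mark if higher else None


# Illustrative data only.
report = PeggyReport(
    subject="Mathematics",
    awarded_grade="6",
    candidate_mark=148,
    grade_boundaries={"4": 100, "5": 125, "6": 145, "7": 170},
    inputs_used={"centre grade range": "grades 3 to 8 over the last three years"},
)
print(report.marks_to_next_boundary())  # 22 marks short of the grade 7 boundary
```

A candidate 22 marks below the next boundary might reasonably decide differently about an appeal than one who is 2 marks below it, which is the kind of decision the report is meant to support.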

It will take collaboration and cooperation across exam boards, perhaps with the JCQ and the Federation of Awarding Bodies. It needs to be designed with a broad range of input and thorough testing. It might not start with every type of exam. This year, depending on the outcomes from the consultation, it might have fewer inputs than usual. But candidates should be offered a consistent standard of explainability and a consistent model in its design, and should not need to navigate different formats just because their exams have been spread across a range of providers.

We already suggested it in 2020.

We think Ofqual should begin work with urgency, and welcome others’ views.

Fig 1. Proposed high level content for the Peggy report, a Personalised Exam Grade Explainer.

Thumbnail featured image credit: BBC